MCP server for interacting with Prometheus metrics and data.

This is a TypeScript-based MCP server that implements a Prometheus API interface. It provides a bridge between Claude and your Prometheus server through the Model Context Protocol (MCP).

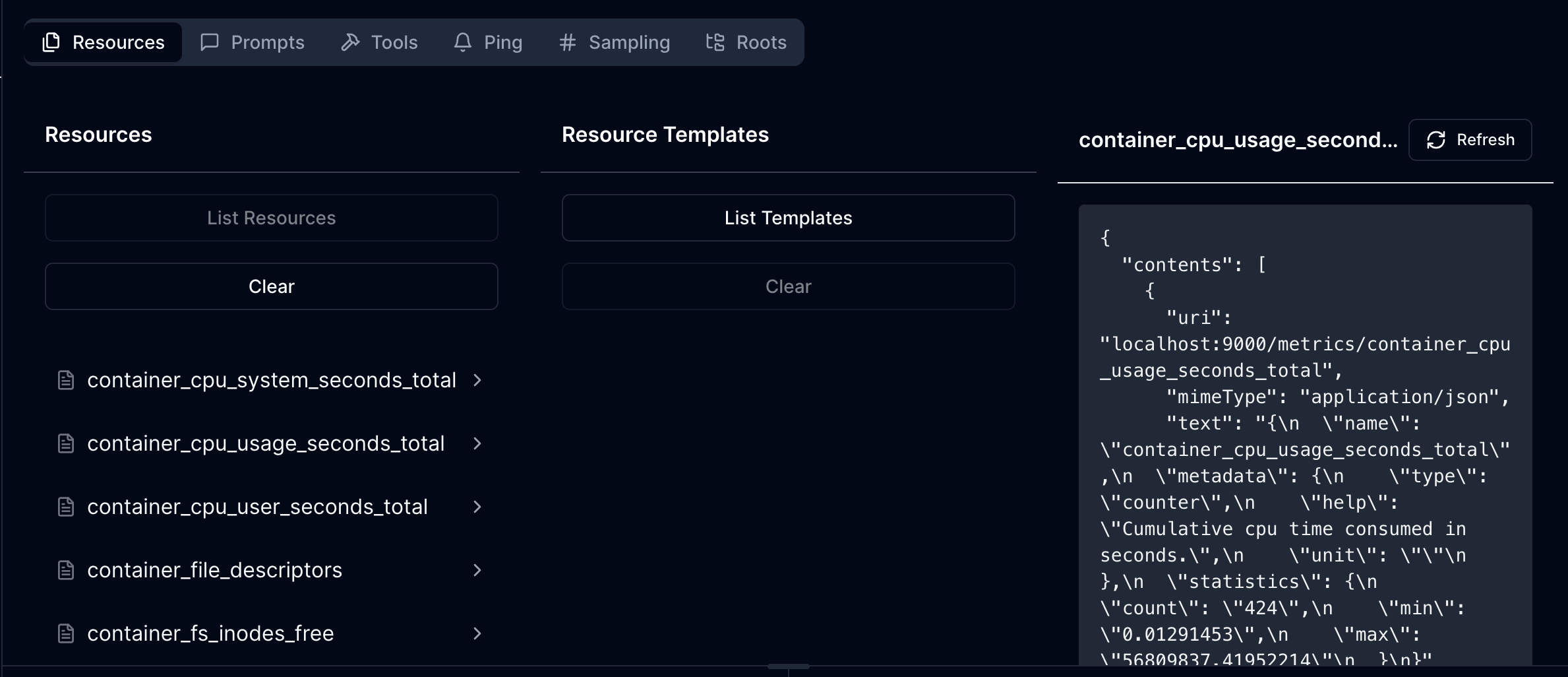

- List and access Prometheus metric schema
- Each metric resource provides:
  - Metric name and description
  - Detailed metadata from Prometheus
  - Statistical information (count, min, max)
- JSON MIME type for structured data access
- List all available Prometheus metrics with descriptions
- Read detailed metric information including:
  - Metadata and help text
  - Current statistical data (count, min, max values)
- Basic authentication support for secured Prometheus instances

The server requires the following environment variable:

- `PROMETHEUS_URL`: The base URL of your Prometheus instance

Optional authentication configuration:

- `PROMETHEUS_USERNAME`: Username for basic auth (if required)
- `PROMETHEUS_PASSWORD`: Password for basic auth (if required)
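As a rough illustration of how these variables might be consumed, the sketch below reads them from an environment map and derives an HTTP Basic `Authorization` header when both credentials are set. Only the three variable names come from this README; `PrometheusConfig`, `loadConfig`, and the trailing-slash handling are hypothetical and may not match what the server actually does.

```typescript
// Hypothetical config shape; only the variable names come from this README.
interface PrometheusConfig {
  baseUrl: string;
  authHeader?: string;
}

type Env = Record<string, string | undefined>;

function loadConfig(env: Env): PrometheusConfig {
  const baseUrl = env.PROMETHEUS_URL;
  if (!baseUrl) {
    throw new Error("PROMETHEUS_URL is required");
  }

  // Assumption: strip trailing slashes so paths can be appended safely.
  const config: PrometheusConfig = { baseUrl: baseUrl.replace(/\/+$/, "") };

  const username = env.PROMETHEUS_USERNAME;
  const password = env.PROMETHEUS_PASSWORD;
  if (username && password) {
    // HTTP Basic auth: base64 of "username:password".
    // btoa only handles Latin-1 input, which is enough for this sketch.
    config.authHeader = `Basic ${btoa(`${username}:${password}`)}`;
  }
  return config;
}
```

In a Node.js process this could be called as `loadConfig(process.env)`. Taking the environment as a parameter keeps the function easy to exercise without touching real process state.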

Install dependencies:

    npm install

Build the server:

    npm run build

For development with auto-rebuild:

    npm run watch

To use with Claude Desktop, add the server config:

On macOS: `~/Library/Application Support/Claude/claude_desktop_config.json`

On Windows: `%APPDATA%/Claude/claude_desktop_config.json`

    {
      "mcpServers": {
        "mcp-server-prometheus": {
          "command": "/path/to/mcp-server-prometheus/build/index.js",
          "env": {
            "PROMETHEUS_URL": "http://your-prometheus-instance:9090"
          }
        }
      }
    }

Since MCP servers communicate over stdio, debugging can be challenging. We recommend using the MCP Inspector:

    npm run inspector

The Inspector will provide a URL to access debugging tools in your browser.

The server exposes Prometheus metrics through the following URI structure:

- Base URI: `http://your-prometheus-instance:9090`
- Metric URIs: `http://your-prometheus-instance:9090/metrics/{metric_name}`
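The URI pattern above can be sketched as a pair of helpers that build a metric URI from a base URL and recover the metric name from it. The function names `metricUri` and `metricNameFromUri` are hypothetical, and percent-encoding the metric name is an assumption rather than documented server behavior.

```typescript
// Hypothetical helper: build a resource URI of the form {base}/metrics/{metric_name}.
function metricUri(baseUrl: string, metricName: string): string {
  const base = baseUrl.replace(/\/+$/, ""); // tolerate a trailing slash on the base URL
  // Assumption: the name is percent-encoded, so ":" in recording-rule names becomes "%3A".
  return `${base}/metrics/${encodeURIComponent(metricName)}`;
}

// Hypothetical inverse: extract the metric name, or undefined if the URI does not match.
function metricNameFromUri(baseUrl: string, uri: string): string | undefined {
  const prefix = `${baseUrl.replace(/\/+$/, "")}/metrics/`;
  if (!uri.startsWith(prefix)) {
    return undefined;
  }
  const encoded = uri.slice(prefix.length);
  return encoded.length > 0 ? decodeURIComponent(encoded) : undefined;
}
```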

Each metric resource returns JSON data containing:

- Metric name

- Metadata (help text, type)

- Current statistics (count, min, max)
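To make the payload concrete, here is a minimal sketch of what such a resource might look like as a TypeScript type, together with one way the count, min, and max could be derived from a Prometheus instant-vector result (where each sample value is a `[timestamp, "value"]` pair with the value encoded as a string). The `MetricResource` field names and the `summarize` function are illustrative assumptions; the server's actual JSON keys and statistics logic may differ.

```typescript
// One sample of a Prometheus instant-vector result:
// value is [unixTimestamp, "sampleValueAsString"].
interface VectorSample {
  metric: Record<string, string>;
  value: [number, string];
}

// Hypothetical payload shape; field names are illustrative, not taken from the server.
interface MetricResource {
  name: string;
  metadata: { help: string; type: string };
  statistics: { count: number; min: number | null; max: number | null };
}

// Compute count/min/max over the current samples of one metric.
// Non-finite values (Prometheus sends strings such as "NaN" or "+Inf") are skipped.
function summarize(samples: VectorSample[]): MetricResource["statistics"] {
  const values = samples
    .map((s) => Number.parseFloat(s.value[1]))
    .filter((v) => Number.isFinite(v));
  if (values.length === 0) {
    return { count: 0, min: null, max: null };
  }
  return {
    count: values.length,
    min: Math.min(...values),
    max: Math.max(...values),
  };
}
```

Here `count` is the number of series with a finite current value, which is one plausible reading of "count"; other definitions (for example, total series including non-finite values) would be equally reasonable.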